Introduction

In the age of AI agents, the Product Owner’s role is not diminishing—it’s evolving into something far more strategic. As AI capabilities advance, the critical question is no longer “What can the model do?” but rather “How do we architect the systems that channel AI capabilities into safe, valuable, and measurable outcomes?”

This is where the concept of a harness becomes essential.

As a product owner, you can think of a harness as “everything that turns a raw model into a shippable, usable product for a specific job.” Same brain (model), different harness → different product/feature.

The harness encompasses:

- Tools the AI can access (APIs, databases, browsers, documents)

- Orchestration patterns that define workflows and decision-making

- Guardrails that establish boundaries, permissions, and safety constraints

- UX surfaces that make the AI’s agency visible and controllable

This article maps the harness concept directly to product owner concerns: scope, requirements, UX, risks, and value. You’ll learn how to think architecturally about AI agents—not as magic boxes, but as systems you design, constrain, and govern.

By the end, you’ll understand how to translate product strategy into the design of AI agents, culminating in a practical tutorial for building an agentic harness for backlog prioritization.

Table of Contents

- Introduction

- 1. What a Harness Is in Product Terms

- 2. How This Shows Up in Real Product Work

- 3. What You Decide as a Product Owner

- 4. Concrete Analogy: Claude Code / Cowork

- 5. How to Use This Concept in Your Day-to-Day PO Work

- 6. Agentic Harness Example: Prioritizing Backlog

- 7. Tutorial: Building an Agentic Harness for Backlog Prioritization

- 8. Conclusion: The PO as the Architect of Agency

- 9. GitHub Project

1. What a Harness Is in Product Terms

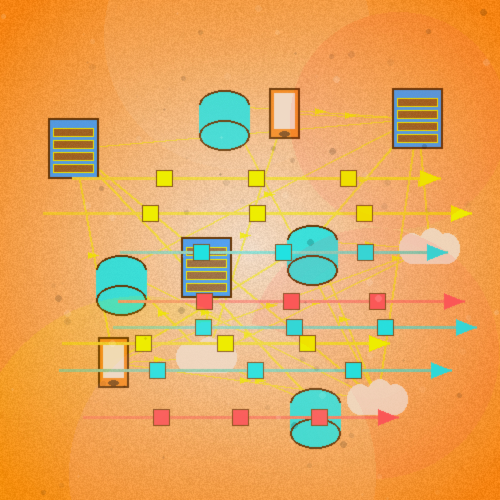

From a product perspective, a harness is the combination of:

Tools the model can use: browser, Discord, email, APIs, spreadsheets, calendars, CRMs, presentations, docs, etc.

Orchestration / workflows: How the system plans, decomposes tasks, chooses tools, loops, and decides when it’s “done.”

Guardrails / policies: permissions, boundaries, safety constraints, rate limits, escalation rules.

UX surface: The way users see and steer the agent: chat, forms, dashboards, “Run workflow” buttons, notifications, logs.

When you design a feature using AI, you are really designing a harness around a model for a particular context of use.

2. How This Shows Up in Real Product Work

Imagine three products, all using the same frontier model:

- Simple chatbot in your app
  - Harness is almost trivial: one input box → model → one response.
  - Product-wise: low power, low complexity, mostly “assistant that talks.”

- Research assistant inside your product
  - Harness now includes:
    - Web search + citation tools
    - Long-context retrieval from customer docs
    - A planner that breaks a research request into sub-tasks
    - A result format (e.g., brief + appendix + sources)
  - Product-wise: a feature that does a research task end to end (e.g., “Draft a competitive analysis about X”).

- Full agent for operations
  - Harness includes:
    - Access to internal APIs (tickets, billing, CRM, ops tools)
    - Permissions per role (what this agent may change)
    - A workflow engine (plan → act → observe → revise)
    - Human-in-the-loop checkpoints
  - Product-wise: this is no longer “chat,” but an autonomous workflow executor embedded in your product.

All three may call the same model, but you, as PO, are specifying three different harnesses.
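To make the "same model, different harness" idea tangible, here is a minimal Python sketch. The `Harness` class, its field names, and the tool names (`tickets_api`, `billing_api`) are hypothetical and chosen only for illustration; they do not come from any real framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Harness:
    """Hypothetical spec object: one model wrapped for one job."""
    job: str
    model: str
    tools: tuple[str, ...] = ()
    workflow: tuple[str, ...] = ()
    guardrails: tuple[str, ...] = ()
    ux_surface: str = "chat"


# Same model name in both cases; only the harness differs.
chatbot = Harness(job="answer questions", model="frontier-model")

ops_agent = Harness(
    job="resolve support tickets",
    model="frontier-model",
    tools=("tickets_api", "billing_api"),
    workflow=("plan", "act", "observe", "revise"),
    guardrails=("human approval for refunds",),
    ux_surface="dashboard",
)

print(chatbot.model == ops_agent.model)  # same brain
print(chatbot == ops_agent)              # different product
```

Written this way, every field is something a PO can put in a spec and discuss with the team, while the `model` line is the only part that stays constant.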

3. What You Decide as a Product Owner

When you design “AI in the product,” the PO decisions are mostly harness decisions:

- What job-to-be-done the harness serves
  - “Help users write emails faster” vs “reconcile invoices” vs “debug code” leads to completely different toolsets and workflows.

- Which tools the agent gets
  - Can it read/write files?
  - Can it call your internal APIs? Which ones?
  - Can it send emails / issue refunds / change settings? Each “yes” is a product and risk decision, not just a technical one.

- How the agent plans and executes
  - Does it use a planner to break down tasks, or just respond once?
  - Does it loop until success, or do exactly N steps?
  - What counts as “done” from a user and business perspective?

- Human-in-the-loop design
  - Where are explicit approval gates?
  - What actions must a human confirm (e.g., sending external emails, financial operations)?
  - How do you present diffs, suggestions, or drafts for review?

- UX for steering and trust
  - How does the user give a task? Free-form prompt, structured form, RFP-like template?
  - How does the agent expose its plan? (“Here’s what I’ll do, step by step.”)
  - How do you surface logs, errors, and “why did you do this?”

- Safety, permissions, and observability
  - Which user roles unlock which agent powers.
  - Audit logs for actions taken by the agent.
  - Rollback options (undo, revert config changes, etc.).

In other words, you own: “What this harness is allowed to do, for whom, how it behaves, and how safe it is.”
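As a rough illustration of how role permissions and approval gates could combine, consider the sketch below. The role names, action names, and the `decide` function are all assumptions made up for this example; a real product would load such a policy from its own configuration.

```python
# Hypothetical policy tables: which roles unlock which agent actions,
# and which actions always require a human to confirm first.
ALLOWED_ACTIONS = {
    "viewer": {"read_ticket"},
    "support": {"read_ticket", "draft_email", "issue_refund"},
}
NEEDS_HUMAN_APPROVAL = {"issue_refund", "send_email"}


def decide(role: str, action: str, approved_by_human: bool = False) -> str:
    """Return 'deny', 'pending_approval', or 'allow' for a requested action."""
    if action not in ALLOWED_ACTIONS.get(role, set()):
        return "deny"  # default-deny: unknown roles and actions get nothing
    if action in NEEDS_HUMAN_APPROVAL and not approved_by_human:
        return "pending_approval"  # approval gate: wait for a human
    return "allow"


print(decide("viewer", "issue_refund"))
print(decide("support", "issue_refund"))
print(decide("support", "issue_refund", approved_by_human=True))
```

The useful part for a PO is that each entry in the two tables is a product decision that can be reviewed line by line, independent of the model behind the agent.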

4. Concrete Analogy: Claude Code / Cowork

Take Claude Code as a concrete harness:

- Tools: virtual machine, terminal, browser, file system.

- Workflows: clone repo → analyze → propose changes → modify files → run tests → iterate.

- UX: chat + file tree + terminal view; the user sees what it’s doing and can intervene.

- Guardrails: runs in a sandbox, cannot touch your real machine directly.

If you were PO for Claude Code, your backlog would be full of harness stories:

- “As a developer, I want the agent to run the test suite automatically after code changes.”

- “As a user, I want to see a diff of all files before they’re applied.”

- “As an admin, I want to restrict which repos the agent can access.”

Notice: none of these are “make the model smarter.” They’re “shape the harness better.”

Claude Cowork is the same pattern, but for knowledge work on the desktop: new tools (local files, browser), new workflows (organize PDFs, extract tables, build a summary), new guardrails (VM, default-deny network). Different product, same philosophical object: a harness for a model.

5. How to Use This Concept in Your Day-to-Day PO Work

If you internalize “harness” you can structure your thinking like this:

- Don’t ask: “What can the model do?” Ask: “What harness do we need to make this job safe, reliable, and valuable?”

- For any AI feature idea, write spec sections explicitly as:

- “Tools available to the agent”

- “Allowed actions”

- “Workflow / plan pattern”

- “Human confirmation points”

- “UX for plan, progress, and result”

- “Audit / metrics”
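One lightweight way to enforce this checklist is a small script that flags empty spec sections before a feature goes to refinement. The sketch below is only an assumption about how you might store a spec (a plain dictionary keyed by section title); the function name `missing_sections` is hypothetical.

```python
REQUIRED_SECTIONS = (
    "Tools available to the agent",
    "Allowed actions",
    "Workflow / plan pattern",
    "Human confirmation points",
    "UX for plan, progress, and result",
    "Audit / metrics",
)


def missing_sections(spec: dict[str, str]) -> list[str]:
    """List the harness sections that are absent or left blank in a spec."""
    return [name for name in REQUIRED_SECTIONS if not spec.get(name, "").strip()]


draft_spec = {
    "Tools available to the agent": "Read-only Jira access",
    "Allowed actions": "Suggest a ranking",
    "Workflow / plan pattern": "",
}

print(missing_sections(draft_spec))
```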

When stakeholders say “Let’s integrate GPT,” translate to: “We’re choosing a model; now we must design the harness and UX that make it a product.”

The Situated Agency of the PO

This is where your background and attention to circumstances actually help: you are deciding the situated agency of the system, that is, its capabilities, its norms, and its responsibilities inside a socio-technical environment.

6. Agentic Harness Example: Prioritizing Backlog

Let’s take a core Product Owner responsibility, prioritizing the backlog, and sketch how an agentic harness for it would look from a PO’s perspective:

1. Job-to-be-Done

- “Help the PO prioritize the product backlog based on business value, technical feasibility, and strategic alignment.”

2. Tools the Agent Can Use

- Access to backlog items (user stories, bugs, features) in Jira/Notion/etc.

- Business strategy documents

- Team capacity calendars

- Metrics dashboards (user engagement, revenue, NPS, etc.)

- Access to stakeholders for async feedback (via comments/emails)

3. Orchestration / Workflow

- Daily or weekly scan for new/updated backlog items

- Fetch and summarize key context for each item (e.g., dependencies, estimated effort, user pain)

- Group items by themes/epics

- Score/rank items using a predefined multi-criteria model (value, effort, risk, urgency)

- Surface conflicts or misalignments with current strategy

- Flag items needing human decision or stakeholder input

- Present a draft “prioritized backlog” for PO validation
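The "predefined multi-criteria model" in the workflow above could be as simple as a weighted score. The sketch below is one possible version, not a recommended formula: the weights, the low/medium/high scale, and the `urgency` and `risk` fields are assumptions that your team would need to agree on.

```python
# Assumed mapping from qualitative levels to numbers.
LEVEL = {"low": 1, "medium": 2, "high": 3}

# Assumed integer weights: value and urgency add to the score,
# effort and risk subtract from it.
WEIGHTS = {"value": 4, "urgency": 3, "effort": 2, "risk": 1}


def score(item: dict) -> int:
    """Weighted benefit minus weighted cost for one backlog item."""
    benefit = (WEIGHTS["value"] * LEVEL[item["value"]]
               + WEIGHTS["urgency"] * LEVEL[item["urgency"]])
    cost = (WEIGHTS["effort"] * LEVEL[item["effort"]]
            + WEIGHTS["risk"] * LEVEL[item["risk"]])
    return benefit - cost


def rank(items: list[dict]) -> list[dict]:
    """Return items sorted from highest to lowest score."""
    return sorted(items, key=score, reverse=True)


items = [
    {"title": "Refactor payment API", "value": "medium", "urgency": "low",
     "effort": "high", "risk": "high"},
    {"title": "Fix login timeout bug", "value": "high", "urgency": "high",
     "effort": "medium", "risk": "low"},
]

for item in rank(items):
    print(score(item), item["title"])
```

Because the weights are explicit, the PO can explain any ranking and change it by editing four numbers, which is exactly the kind of transparency the harness is supposed to provide.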

4. Guardrails / Policies

- Read-only access to production data

- Suggest, never auto-commit backlog changes

- Only send notifications after PO sign-off

- Keep logs of prioritization rationale for audit
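The last guardrail, keeping a log of prioritization rationale, can start as an append-only file with one JSON record per suggestion. This is a minimal sketch under that assumption; the file name, the field names, and the helper functions `log_suggestion` and `read_log` are hypothetical.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_suggestion(log_path: Path, item_id: int, suggested_rank: int,
                   rationale: str) -> dict:
    """Append one suggestion record to a JSON Lines audit file."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "item_id": item_id,
        "suggested_rank": suggested_rank,
        "rationale": rationale,
        "status": "suggested",  # the agent only suggests; it never commits
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def read_log(log_path: Path) -> list[dict]:
    """Load every record from the audit file, oldest first."""
    lines = log_path.read_text(encoding="utf-8").splitlines()
    return [json.loads(line) for line in lines if line.strip()]


# Demo in a temporary directory so nothing in your project is touched.
log_file = Path(tempfile.mkdtemp()) / "prioritization_audit.jsonl"
log_suggestion(log_file, item_id=2, suggested_rank=1, rationale="High value, blocks users.")
log_suggestion(log_file, item_id=1, suggested_rank=2, rationale="High value, low effort.")

entries = read_log(log_file)
print(len(entries), entries[0]["status"])
```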

5. UX Surface

- Dashboard with prioritized backlog list, rationale for rankings, what’s new/changed

- “Why?” explainer buttons for each decision

- “Override” options so the PO remains the ultimate agent of decision-making

- Audit trail of all agent actions and suggestions

Philosophical Note

- In this architecture, the agency of the AI agent is deliberately “situated”: it operates under the conditions, circumstances, priorities and constraints of the real context defined by that PO and organization.

- The AI structures, rationalizes, and presents, but it does not replace the authentic praxis of the PO or the PO’s constant judgment about reality.

Visual Summary:

| Element | Harness for Prioritization |
|---|---|
| Tools | Jira, strategy docs, metrics |
| Orchestration | Scan backlog → Contextualize → Rank |
| Guardrails | Only suggests, detailed logs |
| UX | Interactive dashboard with explanations |
| AI’s Role | Structure; decision remains human |

7. Tutorial: Building an Agentic Harness for Backlog Prioritization

Goal:

Let’s see how we can automate backlog prioritization with AI suggestions, but keep human ownership—combining workflow automation, traceability, and human-in-the-loop decision-making.

7.1. Set Up Your VSCode Environment

7.1.1. Install Essential Extensions

- Atlassian extension for VSCode (if your backlog is in Jira)

- REST Client: run API calls from VSCode

- Markdown Preview Enhanced: for clear review of outputs

7.1.2. Prepare Your Tools

- Your backlog data (exported as CSV/JSON, or via API)

- An account on OpenAI, Anthropic, or another LLM provider (or a local model)

- Python (recommended for scripting, but Node.js also works)

7.2. Fetch Your Backlog Data

- Option 1: Export your ticket list from Jira/Notion as CSV/JSON.

- Option 2: Use REST Client and the appropriate API to pull backlog items directly into VSCode.
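If you go with a CSV export, a few lines of standard-library Python can turn it into the JSON shape used by the script in section 7.3. The sketch below assumes the CSV columns are already named `id`, `title`, `effort`, `value`, and `type`; real Jira or Notion exports use different headers, so you would need to map them first. The function name `csv_to_backlog` is hypothetical.

```python
import csv
import io
import json


def csv_to_backlog(csv_text: str) -> list[dict]:
    """Convert CSV text into a list of normalized backlog items."""
    reader = csv.DictReader(io.StringIO(csv_text))
    items = []
    for row in reader:
        items.append({
            "id": int(row["id"]),
            "title": row["title"].strip(),
            # Lowercase the levels so "High" and "high" are treated alike.
            "effort": row["effort"].strip().lower(),
            "value": row["value"].strip().lower(),
            "type": row["type"].strip().lower(),
        })
    return items


sample = """id,title,effort,value,type
1,Add dark mode,low,high,feature
2,Fix login timeout bug,Medium,High,bug
"""

backlog = csv_to_backlog(sample)
print(json.dumps(backlog, indent=2))
```

To use it on a real export, read the file's text with `open(..., encoding="utf-8")` and write the result to `backlog.json` with `json.dump`.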

7.3. Write an Automation Script (Python Example)

Here’s a minimal script that processes backlog items and asks an LLM for a ranked list with a rationale for each item. It is organized around the harness elements described above.

Simplified Harness Example for Python Automation

| Element | Description |
|---|---|
| Tools | Local JSON/CSV file with backlog items, OpenAI API, terminal |
| Orchestration | Load items → Send to LLM → Get ranked list → Save output |
| Guardrails | Read-only input, output is a suggestion file, no auto-commit |
| UX | Markdown file with ranked backlog + rationale |
| AI’s Role | Rank and explain; human reviews and decides |

7.3.1. Prepare your backlog file (backlog.json)

    [
      {
        "id": 1,
        "title": "Add dark mode",
        "effort": "low",
        "value": "high",
        "type": "feature"
      },
      {
        "id": 2,
        "title": "Fix login timeout bug",
        "effort": "medium",
        "value": "high",
        "type": "bug"
      },
      {
        "id": 3,
        "title": "Refactor payment API",
        "effort": "high",
        "value": "medium",
        "type": "tech-debt"
      },
      {
        "id": 4,
        "title": "Build onboarding tutorial",
        "effort": "medium",
        "value": "high",
        "type": "feature"
      }
    ]

7.3.2. Python script (prioritize.py)

    import json

    import openai

    # 1. LOAD backlog (Tool: local JSON file)
    with open("backlog.json", "r", encoding="utf-8") as f:
        backlog = json.load(f)

    # 2. BUILD prompt (Orchestration: contextualize + rank)
    prompt = f"""
    You are a Product Owner assistant.

    Given this backlog:

    {json.dumps(backlog, indent=2)}

    Rank these items by priority (highest first).
    Consider: business value, effort, and urgency.
    For each item, give a short rationale.
    Output as a numbered markdown list.
    """

    # 3. CALL LLM (Tool: OpenAI API)
    # The client reads the OPENAI_API_KEY environment variable.
    client = openai.OpenAI()

    response = client.chat.completions.create(
        model="gpt-4",
        messages=[{"role": "user", "content": prompt}],
    )

    result = response.choices[0].message.content

    # 4. SAVE output (Guardrail: suggestion only, never auto-commit)
    # UTF-8 is set explicitly because the footer contains an emoji.
    with open("prioritized_backlog.md", "w", encoding="utf-8") as f:
        f.write("# Prioritized Backlog (AI Suggestion)\n\n")
        f.write(result)
        f.write("\n\n---\n")
        f.write("> ⚠️ This is a suggestion. Final decision belongs to the PO.\n")

    print("Done! Review prioritized_backlog.md")

7.3.3. Run it

    python prioritize.py

7.3.4. Example output (prioritized_backlog.md)

# Prioritized Backlog (AI Suggestion)

1. **Fix login timeout bug** — High value, blocks users. Urgent.

2. **Add dark mode** — High value, low effort. Quick win.

3. **Build onboarding tutorial** — High value, improves retention.

4. **Refactor payment API** — Medium value, high effort. Plan for next cycle.

---

> ⚠️ This is a suggestion. Final decision belongs to the PO.

7.4. How the Harness Connects

    backlog.json ──→ prioritize.py ──→ OpenAI API ──→ prioritized_backlog.md
       (Tool)        (Orchestration)     (Tool)        (UX: Markdown output)
                                                               │
                                                  Guardrail: Suggestion only
                                                  Human: Reviews and decides

Philosophical Note

The script structures. The LLM ranks. But only you—the PO—authenticate the final priority.

7.5. Review & Override: The Human-in-the-Loop

- Check prioritized_backlog.md with Markdown Preview Enhanced in VSCode.

- Accept, reject, or change the suggested order and rationales.

- Document any overrides or comments directly in the markdown file—making your decision process explicit (reflecting “situated agency”).

7.6. Repeat, Iterate, and Audit

- Whenever the backlog changes, fetch new data and rerun the script.

- Maintain all versions in your repo (with Git): this provides full traceability (audit trail) of both AI and human prioritization decisions.
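Git history gives you the raw audit trail, but when you rerun the script it also helps to see at a glance what moved. The sketch below compares two ranked lists of titles and reports the differences. It assumes you have already extracted the titles in order from two versions of the output; the function name `rank_changes` is hypothetical.

```python
def rank_changes(previous: list[str], current: list[str]) -> dict[str, str]:
    """Describe how each item's position changed between two rankings."""
    old_position = {title: i for i, title in enumerate(previous, start=1)}
    changes = {}
    for new_rank, title in enumerate(current, start=1):
        if title not in old_position:
            changes[title] = f"new at #{new_rank}"
        elif old_position[title] != new_rank:
            changes[title] = f"#{old_position[title]} -> #{new_rank}"
    for title in previous:
        if title not in current:
            changes[title] = "removed"
    return changes


last_week = ["Fix login timeout bug", "Add dark mode", "Build onboarding tutorial"]
this_week = ["Fix login timeout bug", "Build onboarding tutorial",
             "Add dark mode", "Refactor payment API"]

changes = rank_changes(last_week, this_week)
for title, change in changes.items():
    print(f"{title}: {change}")
```

Items whose position did not change are left out of the result, so the output stays focused on what the PO actually needs to review.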

8. Conclusion: The PO as the Architect of Agency

The age of AI agents does not diminish the Product Owner—it elevates the role.

Every AI agent, no matter how powerful, operates within a harness: a set of tools, workflows, guardrails, and interfaces that someone must design, configure, and govern. That someone is a team of developers, QA engineers, and UX designers, led above all by the Product Owner.

What We Covered

- Harness is the real product decision. The model is commodity; the harness is strategy.

- Job-to-be-done defines the harness. Writing emails, reconciling invoices, debugging code—each demands a unique architecture of tools and workflows.

- Tools go beyond code. Discord, emails, presentations, CRMs—anything that connects the agent to real work is part of the harness.

- Orchestration is the backbone. A clear workflow (scan → enrich → rank → flag → present → iterate) turns raw AI power into reliable value.

- Guardrails protect authenticity. Suggest, never auto-commit. Log everything. Keep the human in the loop.

- UX makes agency visible. Dashboards, explainers, override controls—the PO needs to see, understand, and act.

- AI structures; the human decides. This is not a slogan. It is the architectural principle of every responsible AI product.

The FilósofoTech Principle

Technology scales structure. Only the human scales meaning.

The PO who masters the harness does not compete with AI—they command it.

Not through control, but through vital reason: the situated, authentic, irreplaceable judgment that no algorithm can replicate.

🔄 Scaling the Harness

This is a minimal example. In production, you can expand the harness to include:

| Tool | Purpose |
|---|---|
| Jira / Linear API | Fetch real backlog items |
| Metrics Dashboard | Ground priorities in real data (engagement, revenue, NPS) |
| Discord / Slack Bot | Collect async team feedback |
| Email Integration | Send priority summaries to stakeholders |
| Presentation Export | Auto-generate slides with rationale |
| CRM (Salesforce) | Enrich items with customer impact data |
| Git Repository | Check technical feasibility and tech debt |

9. GitHub Project

This article has a companion project with working code and examples:

👉 agentic-harness-backlog-prioritization

Clone it, run the script, and experiment with your backlog:

    git clone https://github.com/rivolela/agentic-harness-backlog-prioritization.git
    cd agentic-harness-backlog-prioritization
    python prioritize.py

Star ⭐ the repo if you find it useful!

👳🏽‍♂️ Final Mantra

You are not a backlog operator.

You are the architect of product agency.

Own the harness, command the meaning. Technology is structure; product sense is yours.